What Is an AI Agent, Really?

Why an agent is just an LLM with tools

Introduction

The term “AI agent” seems to be everywhere lately.

But what actually is an agent?

Is it just an LLM?

Is it something more?

Is ChatGPT an agent?

Is Claude Code an agent?

What is an LLM?

Before we talk about agents, we need to understand the part at the center of them: the LLM.

LLM stands for Large Language Model.

At the most basic level, an LLM is a system trained to predict the next piece of text based on what came before it. In simple terms, it is very good at continuing language in a useful way.

If you give it:

“How are ...”

it will likely continue with:

“you?”

because that is a common pattern in language.
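To make "predict the next piece of text" concrete, here is a toy sketch that counts which word follows which in a tiny made-up corpus and then picks the most frequent follower. The corpus and the `predict_next` helper are invented for illustration. A real LLM uses a neural network trained on vastly more text and works on tokens rather than whole words, so treat this only as an intuition for the pattern-continuation idea.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; a real LLM is trained on vastly more text.
corpus = [
    "how are you ?",
    "how are you ?",
    "how are things ?",
    "where are you ?",
]

# Count which word follows each word in the corpus.
next_word_counts = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for current, following in zip(words, words[1:]):
        next_word_counts[current][following] += 1


def predict_next(word: str) -> str:
    """Return the most frequent follower of `word` in the toy corpus."""
    return next_word_counts[word].most_common(1)[0][0]


print(predict_next("are"))  # prints "you"
```

In this corpus "you" follows "are" three times and "things" only once, so "you" wins.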

That same mechanism scales up to much more impressive things.

If you ask:

“Generate a tomato soup recipe”

it can produce a believable and often useful recipe. It is not actually cooking. It is not tasting the soup. It is not retrieving a recipe card from a shelf in its brain. It is generating text based on patterns it has learned during training.

That is why LLMs can be incredibly helpful, but also why they can sometimes sound confident while being wrong. They are optimized to generate plausible language, not to guarantee truth.

So if you want the simple definition:

An LLM is the language engine.

It reads your input, interprets it, and generates a response.

That makes it powerful. But on its own, it is still limited.

What about reasoning or “thinking” models?

Some models are better than others at working through harder problems.

When people talk about “thinking” or “reasoning” models, what they usually mean is that the model is better at handling multi-step tasks before producing its final answer. It may spend more effort planning, comparing options, breaking problems into steps, or noticing missing information.

So instead of jumping directly to an answer, it may first work through things like:

what the user is really asking

what information is missing

what steps would solve the task best

whether it should ask a clarifying question

For example, if you ask for a tomato soup recipe, a stronger model may realize that you did not mention serving size, ingredients you want to avoid, or whether you want a quick recipe or a richer one.

That does not mean it thinks exactly like a human. But it does mean it can often reason through a task more effectively before answering.

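So now we have a useful mental model: an LLM is a language model that predicts the next piece of text, and a “reasoning” model is one that works through more intermediate steps before giving its final answer.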

What is an Agent?

To understand what an agent is, we first have to understand what tools are.

What is a Tool?

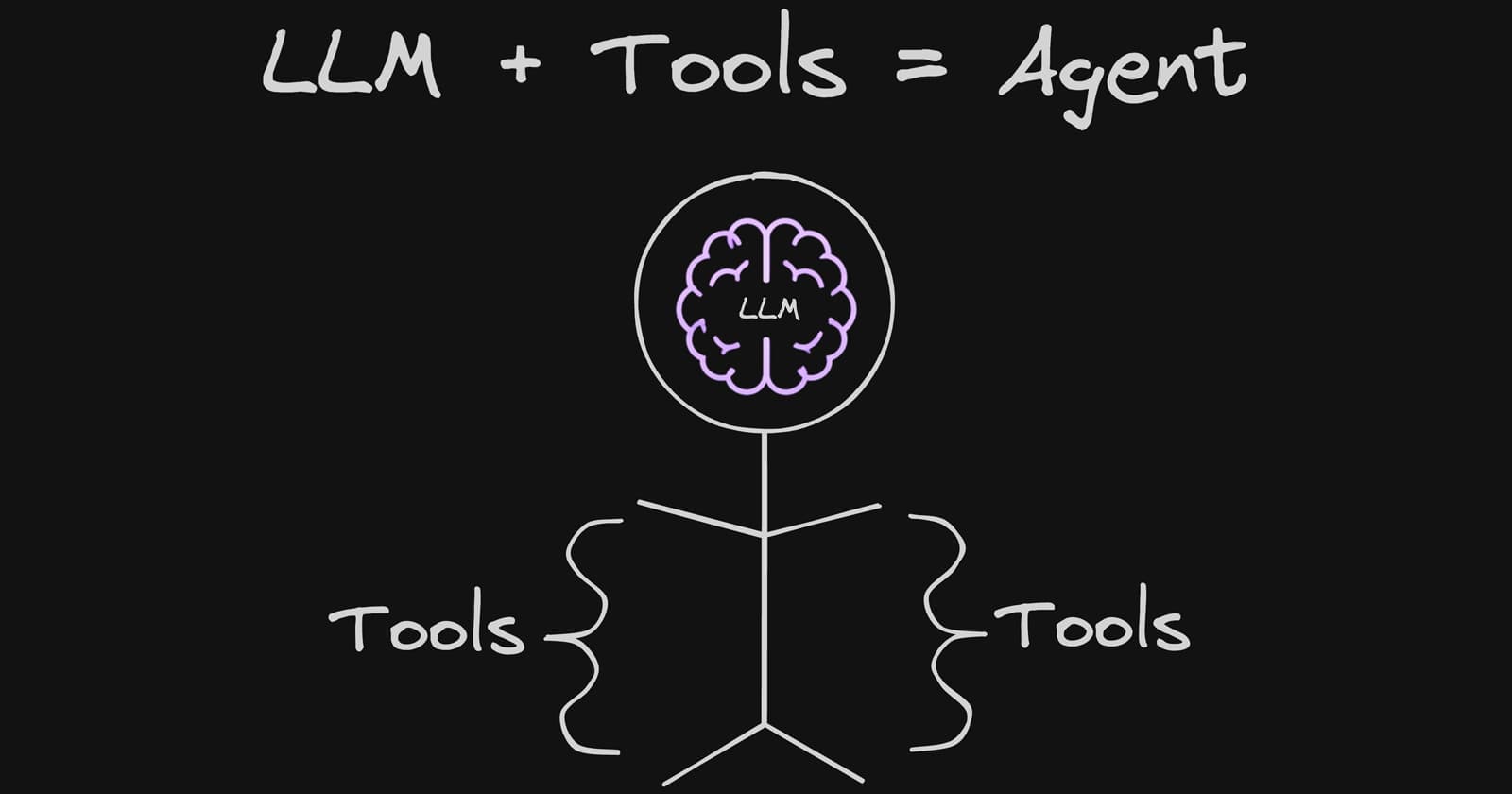

Spoiler: if the LLM is the brain of an agent, then tools are its hands and legs.

Like a brain, the LLM can understand your request, make decisions, and choose what to do next.

But on its own, it cannot directly open a file, browse a website, run code, send an email, check a calendar, or update memory. That is where the hands and legs come in.

That is what tools are for.

A tool is simply a capability the LLM can use to interact with something outside pure text generation.

Some examples of tools:

reading files

writing files

browsing the web

running terminal commands

searching a database

generating images

reading or updating memory

booking flights

checking calendars

sending emails

So if you ask an LLM to summarize a PDF, the LLM itself does not magically “see” the PDF unless it has access to a tool that can read it.

If you ask it to find the cheapest flight, the LLM itself does not inherently know live flight prices unless it has access to a flight search tool.

If you ask it to remember your preference for later, that requires a memory tool it can write to and read from.

Tools are what let the LLM move from just saying things to actually doing things.
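One way to picture a tool in code is as an ordinary function bundled with a name and a description the LLM can read. The sketch below assumes a hypothetical `Tool` record and a `read_file` tool. Neither comes from a specific framework, and real agent libraries each have their own way of declaring tools.

```python
import os
import tempfile
from dataclasses import dataclass
from typing import Callable


@dataclass
class Tool:
    """Hypothetical tool record: a name, a description, and the code to run."""
    name: str
    description: str           # text the LLM reads to decide when to use the tool
    run: Callable[[str], str]  # ordinary code that does the actual work


def read_file(path: str) -> str:
    """Return the text content of the file at `path`."""
    with open(path, encoding="utf-8") as f:
        return f.read()


read_file_tool = Tool(
    name="read_file",
    description="Read a text file and return its contents.",
    run=read_file,
)

# Demo: write a scratch file, then read it back through the tool.
with tempfile.TemporaryDirectory() as scratch_dir:
    note_path = os.path.join(scratch_dir, "note.txt")
    with open(note_path, "w", encoding="utf-8") as f:
        f.write("buy tomatoes")
    print(read_file_tool.run(note_path))  # prints "buy tomatoes"
```

The LLM never opens the file itself. It only asks for the tool to be run, and the surrounding program does the reading and hands the text back.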

Now we can define it clearly.

An agent is an LLM that has access to tools.

That is the core idea.

Not “an LLM that uses tools every time.”

Not “an LLM that acts fully autonomously.”

Not “an LLM that does something magical.”

Just:

LLM + Tools = Agent

The reason this matters is that an LLM alone can only operate within the space of language generation.

Once you give it tools, it can take action.

It can inspect.

It can fetch.

It can write.

It can speak.

It can search.

It can update.

It can operate across systems.

That is what changes it from being only a language model into an agent.
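The "LLM + Tools" idea can be sketched as a small loop: the model either answers directly or asks for a tool, and the program runs the tool and feeds the result back. Everything here is an assumption made for illustration. `fake_llm` is a rule-based stand-in for a real model, and the `CALL:` / `FINAL:` / `TOOL_RESULT:` prefixes are an invented message format, not any vendor's API.

```python
from datetime import date


def get_today(_: str) -> str:
    """Tool: return today's date, which a model cannot know from training alone."""
    return date.today().isoformat()


TOOLS = {"get_today": get_today}


def fake_llm(messages: list[str]) -> str:
    """Rule-based stand-in for a real model, for illustration only."""
    last = messages[-1]
    if last.startswith("TOOL_RESULT:"):
        return "FINAL: Today is " + last.removeprefix("TOOL_RESULT:")
    if "date" in last.lower():
        return "CALL: get_today"
    return "FINAL: I can answer that without a tool."


def run_agent(user_message: str, max_steps: int = 5) -> str:
    """Loop until the model gives a final answer or the step limit is hit."""
    messages = [user_message]
    for _ in range(max_steps):
        reply = fake_llm(messages)
        if reply.startswith("FINAL:"):
            return reply.removeprefix("FINAL:").strip()
        tool_name = reply.removeprefix("CALL:").strip()
        result = TOOLS[tool_name]("")             # the program, not the model, runs the tool
        messages.append("TOOL_RESULT:" + result)  # feed the result back to the model
    return "Stopped: step limit reached."


print(run_agent("What is the date today?"))
print(run_agent("Say hello"))
```

The second call never touches a tool, which matches the point above: having access to tools is what counts, not using them on every request.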

Specialized Agents

Different agents are useful for different reasons because they are given different tools.

A coding agent may have tools like:

read file

write file

search directories

execute terminal commands

A travel agent may have tools like:

search flights

check hotel availability

inspect seat maps

compare prices

retrieve reviews

A calendar or scheduling agent may have tools like:

read calendar

check availability

create events

send confirmations

A research agent may have tools like:

browse the web

open documents

extract information

cite sources

summarize findings

So when people talk about “agents,” they are usually talking about the same basic pattern:

an LLM connected to a set of tools for a particular job

The LLM provides interpretation and decision-making.

The tools provide action.

Together, they form the agent.
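As a rough sketch of that pattern, specialization can be pictured as nothing more than a different tool set attached to the same kind of agent. The `Agent` class and the placeholder tool functions below are hypothetical. They return canned strings instead of touching real files or calendars.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Hypothetical agent record: a name plus the tools it is allowed to use."""
    name: str
    tools: dict[str, Callable[[str], str]] = field(default_factory=dict)

    def can(self, tool_name: str) -> bool:
        """Report whether this agent has been given the named tool."""
        return tool_name in self.tools


# Placeholder tools that return canned strings instead of doing real work.
def read_file(path: str) -> str:
    return f"(contents of {path})"


def run_command(command: str) -> str:
    return f"(output of {command})"


def read_calendar(day: str) -> str:
    return f"(events on {day})"


def create_event(title: str) -> str:
    return f"(created event {title})"


coding_agent = Agent("coding", {"read_file": read_file, "run_command": run_command})
scheduling_agent = Agent(
    "scheduling", {"read_calendar": read_calendar, "create_event": create_event}
)

print(coding_agent.can("run_command"))      # prints True
print(scheduling_agent.can("run_command"))  # prints False
```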

What an Agent is not

An agent is not the same as a perfect autonomous worker.

It is not flawless.

It is not always reliable.

It does not always choose the right tool.

It should not automatically be left unsupervised for important work.

Giving an LLM tools makes it more useful, but it also increases the impact of mistakes. A bad answer is one thing. A bad action is another.

So agents are powerful, but they still need good tool design, sensible permissions, monitoring, and often human oversight.
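One simple form of "sensible permissions" is an approval gate: low-risk tools run freely, while riskier ones wait for a human yes or no. The sketch below is only an illustration under assumed names. The `REQUIRES_APPROVAL` set, the `call_tool` wrapper, and the pretend tools are all hypothetical, and a real system would need much more (logging, argument checks, scoped credentials).

```python
from typing import Callable

# Hypothetical policy: tool names considered risky enough to need a human yes/no.
REQUIRES_APPROVAL = {"send_email", "delete_file"}


def call_tool(
    name: str,
    tool: Callable[[str], str],
    arg: str,
    approve: Callable[[str, str], bool],
) -> str:
    """Run `tool(arg)`, asking `approve` first when the tool is on the risky list."""
    if name in REQUIRES_APPROVAL and not approve(name, arg):
        return f"BLOCKED: {name} was not approved"
    return tool(arg)


def send_email(recipient: str) -> str:
    return f"(pretend email sent to {recipient})"


def search_web(query: str) -> str:
    return f"(pretend results for {query})"


def deny_everything(name: str, arg: str) -> bool:
    """Stand-in for a human reviewer who rejects every request."""
    return False


print(call_tool("search_web", search_web, "tomato soup", deny_everything))
print(call_tool("send_email", send_email, "boss@example.com", deny_everything))
```

The search goes through because it is not on the risky list, while the email is blocked until someone approves it.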

That does not change the definition. It is just good practical awareness.

How to spot an Agent

The easiest way to spot an agent is to ask: can it do things, or can it only say things?

If it can take actions through tools, it is an agent. That means it can not only understand your goal and plan the steps, but also act on those steps by reading files, browsing the web, editing code, updating memory, calling APIs, or interacting with other systems.

ChatGPT is an agent because the GPT model behind it has access to tools and can use them when needed, even if a particular question is simple enough to answer without calling any tool.

Claude Code is also an agent for the same reason: it is an LLM operating with access to tools, in its case specialized for coding tasks like reading files, editing code, and running commands.

Conclusion

The term “agent” does not need to be mystical or overcomplicated.

An LLM by itself can understand and generate language.

Once you give that LLM access to tools, it becomes an agent.

That is the core idea.

Everything else is just details.

You’re probably already using more agents than you think. If one came to mind while reading this, share it.